VANDAL’s Sea Turtle Film Would Have Been “Impossible” Without AI

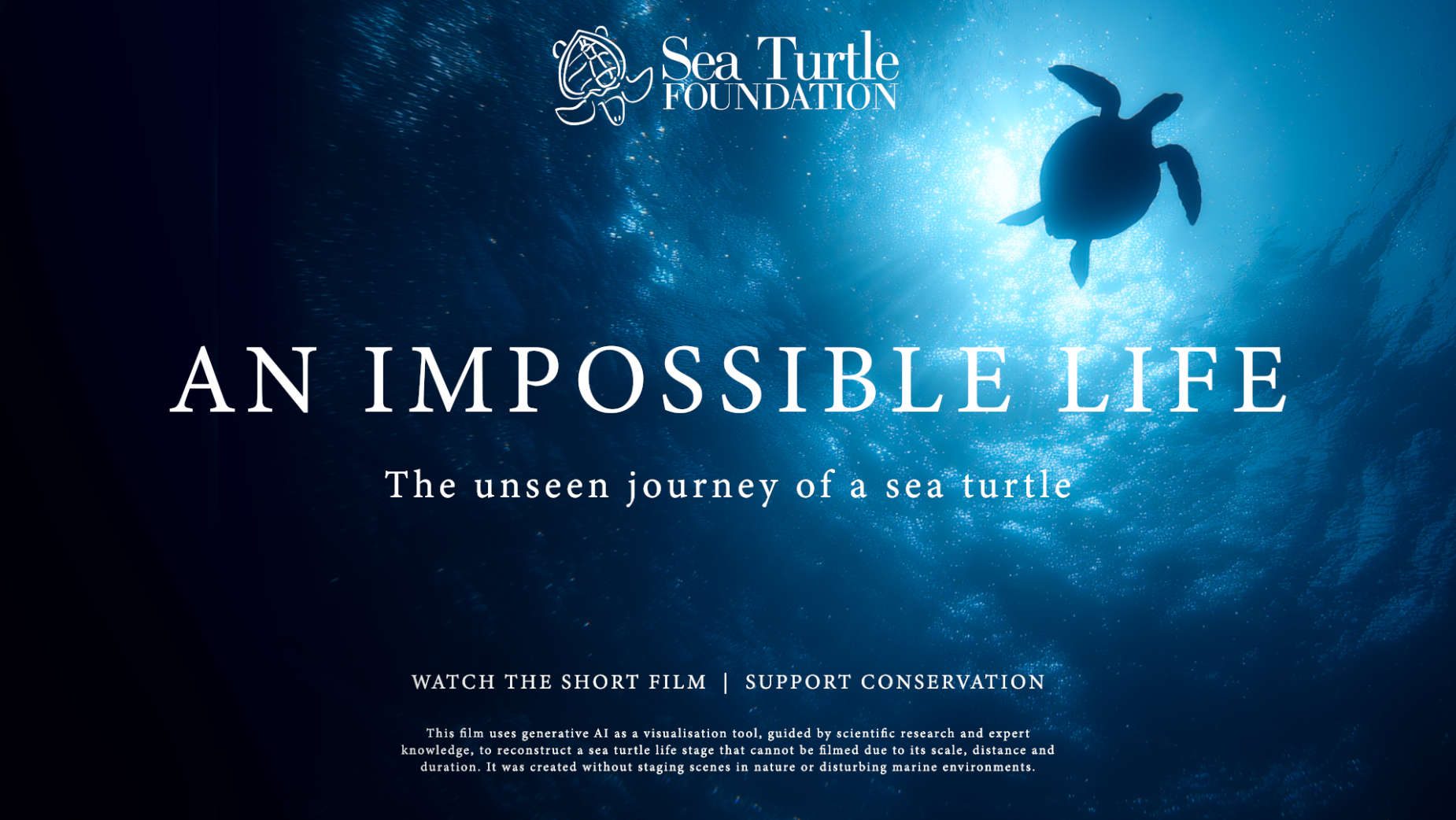

The Sea Turtle Foundation has launched a new conservation short film, ‘An Impossible Life’, created in close collaboration with Sydney creative studio VANDAL and produced entirely using generative AI tools.

Following the life of a single turtle from hatchling to adulthood, the film reconstructs what marine scientists call ‘the lost years’ of sea turtles: the decades spent at sea between leaving the beach as a hatchling and returning to nest.

Though research paints a clear picture of this period (the film notes that only 1 in 1,000 turtles makes it to adulthood), ‘An Impossible Life’ writer and director Chris Scott explained to LBB that direct visual documentation is almost non-existent because of the ocean’s scale and the timeframes involved.

“This life stage of sea turtles has never been filmed even by the BBC or by Blue Planet because of the vast timeframes and the scale of the ocean,” Chris said.

“There is no practical way to follow an individual turtle across thousands of kilometres and decades of open ocean. The life exists, but the images don’t.”

By visualising the largely unseen lives of sea turtles, the film aims to make the existing scientific understanding more accessible to wider audiences, contextualise the risks to the animals, and promote sustained protection efforts, all without disturbing wildlife or marine environments.

The film began as a passion project. Chris shared an early cut with Sea Turtle Foundation chair Scott Machin, who presented it to the foundation’s board and scientific advisory group.

“Generative AI wasn’t a tool we had previously considered, but, in this context, it offered a way to accurately and responsibly shine a light on a part of a sea turtle’s life that has never been documented in detail on film,” Scott said.

“It allowed a story spanning 20–30 years to be told without disturbing wildlife or the environments we’re working to protect.”

From there, the film developed through ongoing collaboration with the foundation’s team of marine scientists, led by Jennie Gilbert.

“What’s important about this project is that it doesn’t speculate wildly or sensationalise,” Jennie said.

“Every scene is grounded in real science and expert understanding. The use of reconstruction allows us to show what we know to be true, without interfering with animals or fragile ecosystems.”

The work isn’t VANDAL’s first foray into AI: the studio has developed traditional and experiential work using AI tools for the last four years, including for the City of Sydney’s New Year’s Eve displays.

This time, though, it chose to accompany the launch with an AI ‘Ethics and Responsible Use’ statement explaining how the technology was employed.

“We’re really transparent about the use of artificial intelligence,” Chris said. “Being able to visualise this life stage of sea turtles … there are a lot of reasons why this has never been documented.”

To Chris, the film is a “genuinely new and meaningful” use of generative AI in storytelling.

“We see this as an important contribution to the broader AI conversation. The intent wasn’t to replace documentary practice, but to visualise a reality that can’t be accessed without disturbing wildlife or ecosystems.

“AI is [seen as] the easy thing to do, because it allows you to do something cheaply, quickly. We wanted to be really, really certain that we communicated that we were using AI in this case to bring to life a life stage of a sea turtle over a frame that would be impossible to shoot.

“The type of stories [the conservation community] is interested in aren’t just quick flashes in the pan.”

The statement describes artificial intelligence as a “visualisation tool”, not a substitute for science, evidence, or observation.

“Attempting to film this life stage directly would require decades-long tracking of individual animals; repeated deployment of vessels, crews, and equipment across remote ocean environments over extended periods of time; [and] extensive use of tagging, lighting, or intervention in sensitive habitats,” the statement reads.

“Such approaches would carry significant ecological disturbance, risk to wildlife, and a substantial environmental footprint -- while still failing to capture the full life stage in any coherent way.

“Generative AI was chosen specifically because it enables visual reconstruction without intrusion: allowing this story to be told without placing cameras, crews or equipment into fragile marine environments, and without staging or manipulating wildlife behaviour.

“All environments, behaviours and interactions depicted were informed by existing scientific knowledge and expert consultation. The process prioritised ecological plausibility, avoiding speculative imagery.”

Every sequence, Chris said, needed to feel right on screen and align with the science.

Chris consciously avoided open-ended generation or uncontrolled world-building during production, instead using a custom GPT to maintain consistency in lens language, camera behaviour, colour response, depth, and pacing.

“Once those parameters were stable, the system could generate detailed Midjourney prompts that stayed within a defined visual logic.”

The soft, droning soundtrack gives the film an integral “sense of emotional weight”, Chris said, an important balance for a project preoccupied with scientific and ecological accuracy.

“Sound was the opportunity to add a bit of emotional gravitas to the piece. Ultimately, it’s a conservation piece … to improve conservation outcomes, you need the public emotionally invested in the story.”

The film’s launch coincides with Australia’s sea turtle hatching season.